Predicting New Product Success with AI

The Uncomfortable Truth About NPD Investment Decisions

If you’ve sat through enough gate meetings, you know the routine: project teams present their business cases, executives ask probing questions, discussions ensue, and decisions get made. But here’s the uncomfortable truth that research reveals: approximately 80% of the positive new product investment decisions turn out to be wrong! Either the approved projects fail commercially, or they get canceled after substantial resources are spent [1].

Think about that for a moment. A coin toss would yield better results!

Recent studies paint an even grimmer picture. The Innovation Research Interchange (IRI) found that only 41% of approved projects ultimately meet their profit targets [2]. Meanwhile, 85% of businesses lack a clear process for terminating underperforming projects, creating pipelines clogged with “zombie projects” that stumble forward but never truly succeed [1].

Why Stage-Gate Alone Isn’t Enough

The Stage-Gate® process revolutionized new product development by specifying best practices for each development stage and building in decision points based on research into what separates winners from losers. Yet despite widespread adoption of Stage-Gate, the portfolio management decision—selecting and prioritizing projects and allocating resources—remains problematic. The challenge isn’t that companies lack frameworks. It’s that human decision-makers struggle with the very nature of NPD decisions: high uncertainty, limited data, and competing pressures [3]. That’s where Moneyball comes into play!

The Human Factor: Why We Get It Wrong

Nobel Prize winner Daniel Kahneman, the founder of behavioral economics, showed how far actual managerial behavior departs from the rational-actor assumptions of classical economics. He demonstrated that people systematically misjudge probabilities, mishandle uncertainty, and overestimate their predictive accuracy, which are precisely the challenges at the heart of new product go/kill decisions [4,5].

In high-uncertainty, high-commitment contexts like pre-development go/kill decisions, biases are predictable, not accidental [6]:

• Confirmation bias: hearing only information that confirms what you already believe.

• Overconfidence bias: systematically overrating forecast accuracy and underestimating downside risk.

• Availability bias: relying on vivid accounts of recent successes or failures when judging a new project.

• Context bias: being swayed by the fluency and confidence of the pitch rather than the quality of evidence.

• Law of small numbers: treating a handful of interviews or a few customers as if they were statistically representative.

• Escalation of commitment: continuing to invest in underperforming projects to justify prior decisions and sunk costs.

The lesson for R&D and marketing leaders is not that managers are irrational, but that our cognitive architecture is mismatched to the complexity and uncertainty of modern innovation decisions.

Learning from Moneyball: When Data Beats Intuition

The 2003 book and subsequent film Moneyball told the true story of how the Oakland Athletics baseball team used statistical analysis and data-based algorithms to select players in the early 2000s. These algorithms outperformed traditional scouts and experts in choosing which players to sign, fundamentally disrupting how professional sports teams today make personnel decisions [7,8].

The parallel to NPD is striking. Like sports teams evaluating talent, managers must predict future performance under uncertainty. Yet while professional sports embraced data-driven decision tools decades ago, most NPD organizations still rely heavily on intuition and informal processes [1].

The contrast with financial services is even more illuminating. In the financial sector, AI has become transformative in investment decision-making [9]:

• AI-driven trading accounted for over 40% of hedge fund trading volume in 2024.

• Hedge funds leveraging AI outperformed their peers by an average of 12% annually.

• A rigorous study of mutual funds found that those with the highest AI usage delivered annual excess returns of 1.56% compared to low-AI funds.

• One Hong Kong venture capital fund even credits an algorithm called “Vital” with pulling it back from the brink of bankruptcy.

Why has the financial sector embraced AI for investment decisions while NPD managers remain reluctant? A 2024 study found that no firms in the U.S. or Europe had entrusted NPD investment decisions to AI, nor did they plan to in the foreseeable future [10]. The findings suggest that managers either lack trust in AI for these critical decisions or believe they can outperform any AI algorithm.

AI-PRISM: An AI-Developed and Operated Predictive Model

There is a solid middle path: using AI not to replace human judgment, but to enhance it with data-driven insights. This is where AI-PRISM (Product Risk and Innovation Success Metric) enters the picture.

AI-PRISM was created through an innovative process: AI itself (specifically Perplexity Pro) reviewed extensive research on new product success-versus-failure factors and developed a predictive model based on what it uncovered [11]. AI analyzed dozens of research articles and existing scoring models to identify the most important factors separating winners from losers.

The resulting model includes seven key factors consistently identified as critical to new product success, and is shown in Figure 1. Each factor itself is based on 2–3 sub-factors, also weighted (20 sub-factors in total, too many to show).

Figure 1: The AI-PRISM model with 7 key factors that drive new product success. The 7 factors are in turn composed of 20 sub-factors.

Here’s how AI-PRISM works [11]:

1. Data Ingestion: The project team uploads the available project description and data—similar to the contents of a standard business case—covering product details, market analysis, technology, and financials.

2. Autonomous Analysis: AI-PRISM (leveraging Perplexity Pro) reviews the internal data and autonomously seeks additional information from external online sources to bridge any knowledge gaps and perform a comprehensive evaluation.

3. Scoring and Quantification: The model evaluates the project using PRISM’s 20 sub-questions, calculates the seven factor scores, and determines the overall project score.

4. Strategic Rationale: Beyond simple scoring, the model provides a detailed rationale for its evaluation, assesses market prospects, identifies key risks, and suggests mitigation strategies.

5. Final Recommendation: AI-PRISM generates a specific strengths and weaknesses assessment, calculates a probability of commercial success, and issues a final recommendation (Go/No Go) accompanied by a list of next steps.
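The roll-up in step 3 can be pictured as two layers of weighted averaging. The sketch below is illustrative only: the factor names, the 0–10 rating scale, and all weights are hypothetical placeholders, since the actual AI-PRISM factors and weights are not listed here.

```python
from dataclasses import dataclass


@dataclass
class SubFactor:
    name: str
    score: float   # rating on an assumed 0-10 scale
    weight: float  # relative weight within its parent factor


def factor_score(sub_factors: list[SubFactor]) -> float:
    """Weighted average of sub-factor ratings, rescaled to 0-100."""
    total_weight = sum(s.weight for s in sub_factors)
    if total_weight <= 0:
        raise ValueError("sub-factor weights must sum to a positive number")
    weighted = sum(s.score * s.weight for s in sub_factors)
    return 10.0 * weighted / total_weight


def project_score(factors: dict[str, tuple[float, list[SubFactor]]]) -> float:
    """Weighted average of factor scores; each value is (factor_weight, sub_factors)."""
    total_weight = sum(weight for weight, _ in factors.values())
    if total_weight <= 0:
        raise ValueError("factor weights must sum to a positive number")
    return sum(w * factor_score(subs) for w, subs in factors.values()) / total_weight


# Hypothetical example with two placeholder factors (not the real PRISM factors).
example_factors = {
    "Factor A": (0.6, [SubFactor("A1", 8, 2), SubFactor("A2", 6, 1)]),
    "Factor B": (0.4, [SubFactor("B1", 5, 1), SubFactor("B2", 7, 1)]),
}
total = project_score(example_factors)
print(f"Overall project score: {total:.1f} out of 100")
```

The same structure would extend to seven factors and 20 sub-factors; only the dictionary grows.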

To illustrate the system in action, consider VivaFlow Go, a reusable, insulated water bottle with a modular lid that accepts snap‑in flavor and function pods (electrolytes, vitamins, natural flavors), plus a low‑energy sensor that tracks how much the user drinks each day. It connects via Bluetooth to a simple companion app that shows hydration, provides gentle reminders, and can suggest pod combinations based on activity level, weather, and personal goals.

The project belongs to AquaPure Industries, a mid‑sized US manufacturer that currently produces traditional reusable water bottles (plastic and stainless steel) for retail and promotional markets. The firm has strong capabilities in bottle design, materials, insulation, and large‑scale manufacturing, plus established relationships with major retail chains and online marketplaces.

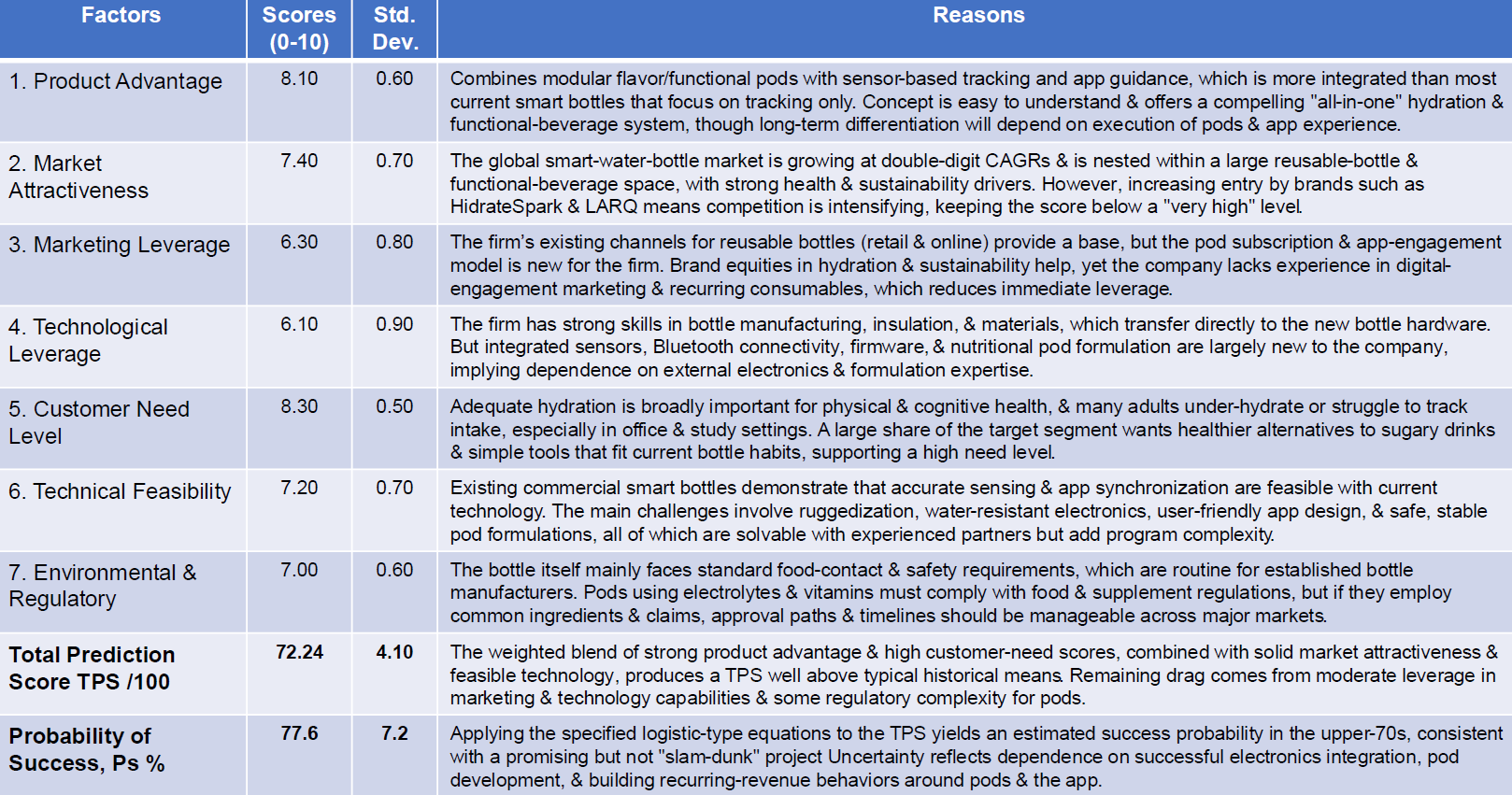

The project team submitted their preliminary business case, including market, technical, and financial analyses, to AI-PRISM for evaluation. AI-PRISM analyzed the submission, sourcing external data to fill information gaps. It scored the project on the 20 sub-factors, determined the seven factor scores, and generated a total prediction score out of 100. Algorithms then converted this score into a probability of commercial success (see Table 1).

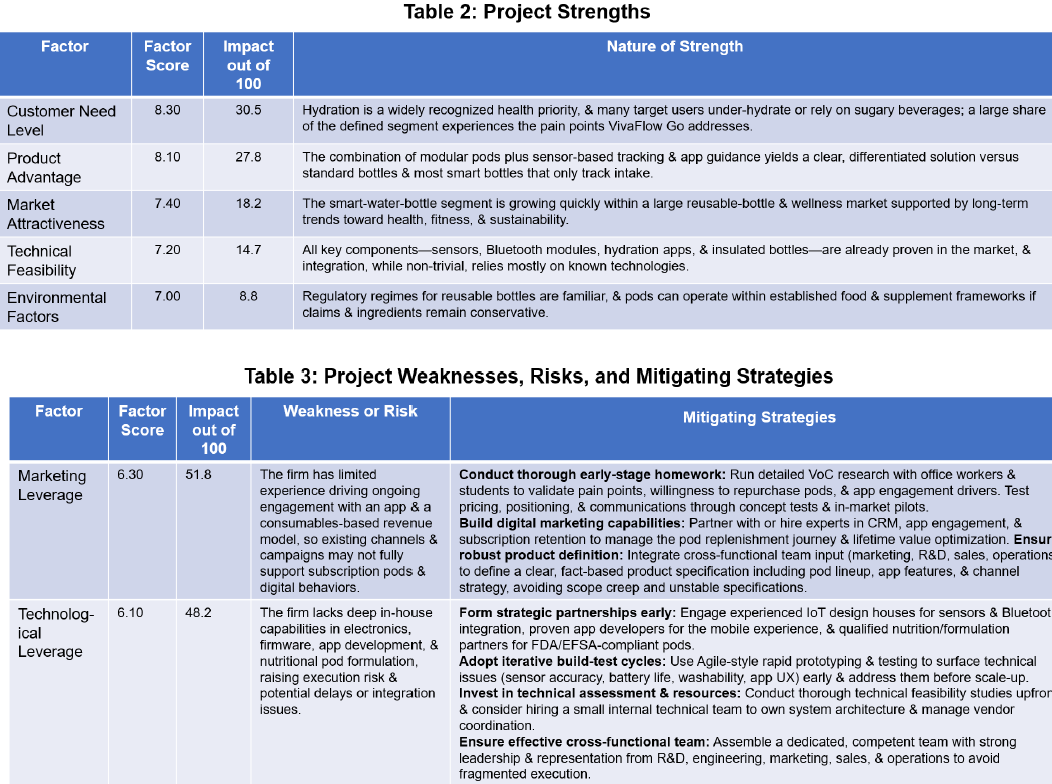

The model also conducted a detailed qualitative review (see Tables 2 and 3 for the strengths and weaknesses assessments). AI-PRISM recommended a “CONDITIONAL GO – Proceed to next development stage with mandatory conditions,” and outlined specific key actions.

The detailed 9-page AI-PRISM report concluded with: “On the evidence and scoring, the project merits a Conditional Go: proceed to the next stage with clear, mandatory conditions around securing capable, experienced partners for electronics, app, and pod formulation; running robust Voice-of-Customer research and iterative concept/prototype testing; demonstrating attractive pod-repurchase and app-engagement metrics in limited pilots before full-scale launch commitments; completing regulatory path assessment and formulation feasibility; and assembling a dedicated, cross-functional team with strong leadership and adequate resources”.

AI-PRISM also provided an appendix containing detailed market, VoC, competitive, and technical assessments to assist the project team in refining their business case.

But Does AI-PRISM Really Predict NP Success?

Validity: In many prediction settings, model validation involves waiting months or years to observe actual outcomes and then comparing those outcomes with the model’s predictions. This type of longitudinal validation is not practical for AI‑PRISM, because (1) it may take five or more years before the commercial results of many new products are known, and (2) some projects will be terminated or lost before launch, so their eventual success or failure will never be observed.

Instead, we adopted a “second opinion” approach, similar to practices in medicine when definitive outcomes are delayed or uncertain [12]. Specifically, we submitted the same 13 projects that AI-PRISM had evaluated to an entirely different AI model (DeepSeek, a Chinese-developed model) and compared its predictions with those from AI-PRISM.

When plotted against each other, the two sets of predictions exhibited an almost perfect linear relationship, with an R² of 96.8%, indicating that the two models, despite their different architectures and origins, produced nearly identical assessments of project success potential [13]. Conclusion: The “second opinion” appears to validate AI-PRISM.
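For readers who want to run the same kind of agreement check on their own projects, here is a minimal sketch of how an R² between two sets of scores can be computed. For a simple linear fit, R² equals the squared Pearson correlation. The score lists are made-up placeholders, not the study's data.

```python
def r_squared(x: list[float], y: list[float]) -> float:
    """Squared Pearson correlation between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("scores must vary for R-squared to be defined")
    return (sxy * sxy) / (sxx * syy)


# Placeholder scores (0-100) for five hypothetical projects from two models.
model_a_scores = [40.0, 55.0, 62.0, 70.0, 85.0]
model_b_scores = [42.0, 53.0, 65.0, 71.0, 83.0]
agreement = r_squared(model_a_scores, model_b_scores)
print(f"R-squared between the two models: {agreement:.3f}")
```

A value near 1 means the two evaluators rank and space the projects almost identically; it says nothing by itself about whether either one matches eventual market outcomes.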

Reliability: AI-PRISM’s scores were quite consistent over multiple runs for the same project (a standard deviation averaging 5.7%). For all 13 projects, AI-PRISM was given the same information from the project teams for each run, but could also access information online, hence some variability. The conclusion: high consistency and reliability for AI-PRISM.

By contrast, when human managers’ ratings were investigated, their scores per project were quite scattered.* Although these were seasoned managers, their scores for the same project varied widely (a standard deviation of 17.5%, about three times the model’s).

* Groups of 5-8 managers had previously scored the 13 projects as gatekeepers.

Additionally, managers were less discriminating between good and bad projects, with their total scores more clustered around the mean of 65 out of 100. Finally, managers’ scores did not correlate well with either DeepSeek’s or Perplexity’s scores (R² of 32% and 28% respectively).

The results suggest that these managers’ assessments were less reliable, less discriminating, and less valid than AI-PRISM’s predictions [13]!
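The consistency comparison above rests on a simple statistic: the standard deviation of scores for the same project, averaged across projects. A minimal sketch follows, assuming scores on a 0–100 scale and using the sample standard deviation; the ratings shown are invented for illustration and are not the study's data.

```python
from statistics import mean, stdev


def average_spread(scores_by_project: dict[str, list[float]]) -> float:
    """Mean of the per-project sample standard deviations."""
    return mean(stdev(scores) for scores in scores_by_project.values())


# Invented scores: three repeated model runs and three managers per project.
model_runs = {"P1": [70.0, 72.0, 74.0], "P2": [50.0, 55.0, 60.0]}
manager_ratings = {"P1": [50.0, 70.0, 90.0], "P2": [40.0, 60.0, 80.0]}

model_spread = average_spread(model_runs)
manager_spread = average_spread(manager_ratings)
print(f"Model spread: {model_spread:.1f}, manager spread: {manager_spread:.1f}")
```

The lower the average spread, the more repeatable the evaluator is on the same information.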

Information Source Quality: Beta users of AI-PRISM raised a critical concern: not all information sources are equally reliable. AI-PRISM now addresses this by using the Source Quality Rating Framework, developed with help from AI, which weights sources by credibility:

• Primary Sources: data generated by the target company or regulatory bodies; e.g., patent databases, or product certifications (weight: 1.0).

• Professional Sources: trusted market research, analyst briefings, and regulatory/industry body reports; e.g., Gartner Magic Quadrant or McKinsey reports (weight: 0.9).

• Established Analysis: reviews and analysis from respected business journals and academic publications (weight: 0.9).

• Trade/Business News: timely, reasonably vetted information, such as high-credibility trade outlets and magazines (weight: 0.7).

• Industry Analysis: well-known blog authors, association white papers, and presentations at conferences (weight: 0.5).

• General Web: unvetted or popular sources, including mainstream online commentary (weight: 0.4).

• Unofficial Sources: blogs or YouTube; least reliable; often lacking attribution or responsible editorial oversight (weight: 0.2).

This systematic weighting ensures predictions rest primarily on authoritative information while avoiding “garbage in, garbage out” problems.
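One plausible way to apply such weights is a credibility-weighted average, in which each piece of evidence counts in proportion to the rating of its source. The sketch below uses the seven weights listed above; the aggregation rule, the tier keys, and the sample figures are assumptions for illustration, not a description of AI-PRISM's internal logic.

```python
# Credibility weights taken from the list above; dictionary keys are our own labels.
SOURCE_WEIGHTS = {
    "primary": 1.0,
    "professional": 0.9,
    "established_analysis": 0.9,
    "trade_news": 0.7,
    "industry_analysis": 0.5,
    "general_web": 0.4,
    "unofficial": 0.2,
}


def weighted_estimate(evidence: list[tuple[str, float]]) -> float:
    """Credibility-weighted average of (source_tier, value) pairs."""
    total_weight = sum(SOURCE_WEIGHTS[tier] for tier, _ in evidence)
    if total_weight == 0:
        raise ValueError("no evidence supplied")
    weighted_sum = sum(SOURCE_WEIGHTS[tier] * value for tier, value in evidence)
    return weighted_sum / total_weight


# Hypothetical market-growth estimates (% per year) from three kinds of sources.
growth_evidence = [("primary", 6.0), ("trade_news", 8.0), ("unofficial", 15.0)]
simple_average = sum(value for _, value in growth_evidence) / len(growth_evidence)
weighted_average = weighted_estimate(growth_evidence)
print(f"Simple: {simple_average:.1f}%, credibility-weighted: {weighted_average:.1f}%")
```

In this example the optimistic figure from the unofficial source pulls the simple average up, while the weighted estimate stays closer to the primary-source number.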

Simulated Multiple Runs: With any measurement tool, the reliability check usually involves taking multiple readings to check for consistency. However, because AI accesses almost the same sources each time, repeated runs produce artificially identical results.

To provide a realistic consistency check, AI-PRISM simulates the range of scores that five independent experts would likely assign, based on how clear the evidence is and how novel the project is [13].

Practical Applications for Your Organization

AI-PRISM doesn’t make go/no-go decisions—humans still retain that critical role. Instead, it serves two vital functions:

1. Reality Check for Project Teams: Project teams are often overly optimistic about their projects. By conducting an AI-PRISM analysis and inviting knowledgeable outsiders to participate, teams can identify strengths and weaknesses they might have overlooked.

2. Enhanced Decision Support at Gate Meetings: Management can integrate AI-PRISM into decision meetings as an “additional evaluator” alongside human decision-makers. AI-PRISM’s strengths/weaknesses assessment promotes active, intelligent discussion at the gate review; further, the probability of success from AI-PRISM can then feed into the Expected Commercial Value (ECV) calculation [14]:

ECV = Probability of Success × Present Value of Future Earnings – (Development Cost + Commercialization Cost)

This ECV provides a more realistic estimate of a project’s economic value by building in risk and correcting for the tendency of financial projections to often exceed reality.
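As a quick worked example of the ECV formula above, the sketch below plugs in hypothetical figures (in $ millions). The function and parameter names are ours, and the numbers are purely illustrative.

```python
def expected_commercial_value(
    p_success: float,
    pv_future_earnings: float,
    development_cost: float,
    commercialization_cost: float,
) -> float:
    """ECV = P(success) x PV of future earnings - (development + commercialization cost)."""
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("probability of success must be between 0 and 1")
    return p_success * pv_future_earnings - (development_cost + commercialization_cost)


# Hypothetical project, all amounts in $ millions.
ecv = expected_commercial_value(
    p_success=0.6,
    pv_future_earnings=10.0,
    development_cost=2.0,
    commercialization_cost=1.5,
)
unadjusted = 10.0 - (2.0 + 1.5)  # same project with success treated as certain
print(f"ECV: {ecv:.2f}  vs. unadjusted value: {unadjusted:.2f}")
```

With a 60% probability of success, the project's value drops from 6.5 to 2.5, which shows how strongly the success probability drives the result.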

The Path Forward

The financial sector has demonstrated that AI can outperform humans in making investment decisions under uncertainty. Professional sports showed that data-driven algorithms beat expert intuition in talent selection. The question for NPD leaders is no longer whether AI can help improve decision-making—it’s how quickly you’ll adopt these tools before your competitors do.

AI-PRISM represents a practical middle path: leveraging AI’s analytical power and freedom from cognitive bias while keeping humans firmly in control of final decisions. It’s Moneyball for new product development: using data and algorithms to make smarter bets on which projects will succeed.

The stakes are too high, and the error rates too significant, to continue relying solely on intuition for decisions that determine your company’s future. It’s time to bring the power of AI to your next gate meeting.

About the Author:

Dr. Robert G. Cooper is Professor Emeritus at McMaster University’s DeGroote School of Business (Canada); ISBM Distinguished Research Fellow at Pennsylvania State University’s Smeal College of Business Administration; Honorary Advisor, Snyder Innovation Management Center, Syracuse University; and a Crawford Fellow of the Product Development and Management Association (PDMA). Bob is also the recent winner of the 2025 Global Lifetime Scholar Award for “Management of Product Innovation” (ScholarGPS: https://scholargps.com/).

Bob is the creator of the Stage-Gate® process model, now the most popular idea-to-launch NPD process globally (for physical product firms). He is also co-founder of Stage Gate International Inc. Bob has published 12 books, including the “bible for NPD,” Winning at New Products, and more than 160 articles on the management of new products, including 17 refereed articles on “AI in NPD” in 2023–26. He has won the IRI’s (Innovation Research Interchange) prestigious Maurice Holland Award three times for “best article of the year.”

Bob has helped hundreds of firms over the years implement best practices in product innovation, including many Fortune 500 firms. Cooper holds Bachelor’s and Master’s degrees in chemical engineering from McGill University in Canada, and a PhD in Business and an MBA from Western University, Canada.

Website: www.bobcooper.ca

Contact: robertcooper@cogeco.ca

Endnotes & References:

1. This Global PDMA study of best practices is one of a series of studies done over several decades. The research shows the “attrition curves” of new product ideas and projects over time, and allows the computation of success rates at different points in a project. See: Knudsen, M. P., M. von Zedtwitz, A. Griffin, and G. Barczak. 2023. “Best practices in New Product Development and Innovation: Results from PDMA’s 2021 Global Survey.” Journal of Product Innovation Management 40(3): 257–275. https://doi.org/10.1111/jpim.12663

2. This article is a report of the IRI US study into Portfolio Management Practices in NPD: Cooper, R. G., M. Desai, L. Green, and E. J. Kleinschmidt. 2024. “Strategies to Improve Portfolio Management of New Products.” Research-Technology Management 67(1): 55–66. https://doi.org/10.1080/08956308.2023.2277992

3. Mitchell, R., R. Phaal, N. Athanassopoulou, C. Farrukh, and C. Rassmussen. 2022. “How to Build a Customized Scoring Tool to Evaluate and Select Early-Stage Projects.” Research-Technology Management 65(3): 27–38. https://doi.org/10.1080/08956308.2022.2026185

4. Kahneman, Daniel. 2002. “Maps of Bounded Rationality: A Perspective on Intuitive Judgment and Choice.” Nobel Prize Lecture, December 8. https://www.nobelprize.org/uploads/2018/06/kahnemann-lecture.pdf

5. Kahneman, Daniel. 2011. Thinking, Fast and Slow. New York: Macmillan.

6. Tversky, Amos, and Daniel Kahneman. 1974. “Judgment under Uncertainty: Heuristics and Biases.” Science 185(4157): 1124–1131. https://doi.org/10.1126/science.185.4157.1124

7. Lewis, Michael. 2003. Moneyball: The Art of Winning an Unfair Game. New York: W.W. Norton & Company.

8. Lewis, Michael. 2016. The Undoing Project: A Friendship That Changed Our Minds. New York: W.W. Norton & Company.

9. More detail on the use of AI in financial trading and investing, along with references, is available in: Cooper, R.G. 2025. “AI-PRISM: A New Lens for Predicting New Product Success,” PDMA kHUB 2.0, February 28.

10. Cooper, Robert G., and Alexander M. Brem. 2024. “Insights for Managers About AI Adoption in New Product Development.” Research-Technology Management 67(6): 39–46. https://doi.org/10.1080/08956308.2024.2418734

11. The beta version of AI-PRISM has been described in three articles. The current version of AI-PRISM has been improved, notably regarding data source quality and output reliability, based on user feedback from the beta tests. See: Cooper, endnotes [9] and [13]; also: Cooper, Robert G. 2025. “From Intuition to Insight: Leveraging AI to Forecast New Product Success,” IEEE Engineering Management Review, Early Access, April 14, 1–10. DOI: 10.1109/EMR.2025.3559777 Link: http://www.bobcooper.ca/images/files/articles/5/AIForecastsNPSuccessIEEEEMR.pdf

12. Carrasco-Ribelles, LA, Llanes-Jurado, J., Gallego-Moll, C., Cabrera-Bean, M., Monteagudo-Zaragoza, M., Violán, C., and Zabaleta-Del-Olmo, E. 2023. “Prediction models using artificial intelligence and longitudinal data from electronic health records: a systematic methodological review,” J Am Medical Inform Assoc. Nov. 17; 30(12): 2072–2082. https://doi.org/10.1093/jamia/ocad168. PMID: 37659105; PMCID: PMC10654870

13. Cooper, Robert G. 2025. “A New AI Model Shows Promise in Predicting New Product Success.” Research-Technology Management 68(4): 51–59. https://doi.org/10.1080/08956308.2025.2496109

14. Cooper, R. G. 2023. “Expected Commercial Value for new-product project valuation when high uncertainty exists.” IEEE Engineering Management Review 51(2): 75–87. https://doi.org/10.1109/EMR.2023.3267328